AI Agents Series · Part 2 of 3 · Cybersecurity · April 2026

For thirty years, finding software vulnerabilities required exceptional human expertise: years of specialised knowledge, weeks of focused effort, and a combination of intuition and pattern recognition that was genuinely rare. In 2026, AI has compressed that timeline to hours. It is finding bugs that survived for decades in widely used software, and it is doing so at a scale that changes the security landscape for defenders and attackers alike.

This is not a future scenario. It is happening right now, and the implications reach every business that runs software — which is every business.

The Moment Everything Changed

Daniel Stenberg leads the development team behind cURL, a 30-year-old open-source data transfer tool used in everything from cars to medical devices. In early 2026, something shifted in the quality and quantity of security reports he was receiving.

With one click, an AI tool flagged more than 100 bugs in his code that had survived rounds of review by humans and traditional code analysers. Three months into 2026, his team had already found and fixed more vulnerabilities than in either of the previous two full years.

Stenberg’s experience is not unique. Across the software community, developers are reporting the same thing: AI models got dramatically better at finding bugs, almost overnight, following the release of new cutting-edge models in late 2025.

How AI Finds Bugs That Humans Miss

Understanding why AI is so effective at vulnerability discovery requires understanding why humans miss bugs in the first place.

Human security researchers are pattern-matchers working at human speed. They are exceptionally good at finding classes of vulnerabilities they have seen before, in code structures they recognise, in the limited portion of a codebase they can hold in working memory at once. They miss things for three reasons: scale (millions of lines of code), novelty (new vulnerability patterns they haven’t seen), and cognitive load (attention degrades over time).

AI addresses all three simultaneously. It scans millions of lines in minutes. It identifies structural patterns associated with vulnerability classes regardless of whether it has seen that exact pattern before. And it does not get tired.
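For contrast, here is a minimal sketch of the name-based pattern matching that traditional scanners rely on. It is not how an AI model works internally. The rule set, the `scan_source` helper, and the C sample are all illustrative assumptions rather than any real tool's configuration.

```python
import re

# Hypothetical rule set: C library calls that are commonly flagged as risky.
RISKY_CALLS = {
    "strcpy": "unbounded copy; consider a length-checked alternative",
    "gets": "reads without a bound; removed from the C11 standard",
    "sprintf": "unbounded format; consider snprintf",
}

# Match any listed function name followed by an opening parenthesis.
CALL_PATTERN = re.compile(r"\b(" + "|".join(RISKY_CALLS) + r")\s*\(")

def scan_source(source: str) -> list:
    """Return (line_number, call_name, advice) for each risky call found."""
    findings = []
    for line_number, line in enumerate(source.splitlines(), start=1):
        for match in CALL_PATTERN.finditer(line):
            name = match.group(1)
            findings.append((line_number, name, RISKY_CALLS[name]))
    return findings

# Illustrative C snippet held as a string; it is scanned, not compiled.
SAMPLE = """\
void greet(char *name, size_t len) {
    char buf[16];
    strcpy(buf, name);
    if (len <= sizeof(buf)) memcpy(buf, name, len + 1);
}
"""

for finding in scan_source(SAMPLE):
    print(finding)
```

The scanner reports the `strcpy` call on line 3 but says nothing about line 4, where the `<=` comparison allows a 17-byte copy into a 16-byte buffer. Catching that kind of flaw requires reasoning about what the values mean, which is where contextual analysis comes in.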

What AI Bug-Finding Agents Can Do in 2026

- Scan entire codebases at a speed and depth no human team can match — in minutes, not weeks

- Identify logic flaws that traditional pattern-based scanners miss because they require contextual reasoning, not just pattern matching

- Find zero-days — previously unknown vulnerabilities — in production systems, including operating systems and browsers

- Chain vulnerabilities — identifying how multiple individually low-severity flaws can be combined to achieve full system compromise

- Reproduce and verify discovered vulnerabilities, reducing false positives that waste security teams’ time

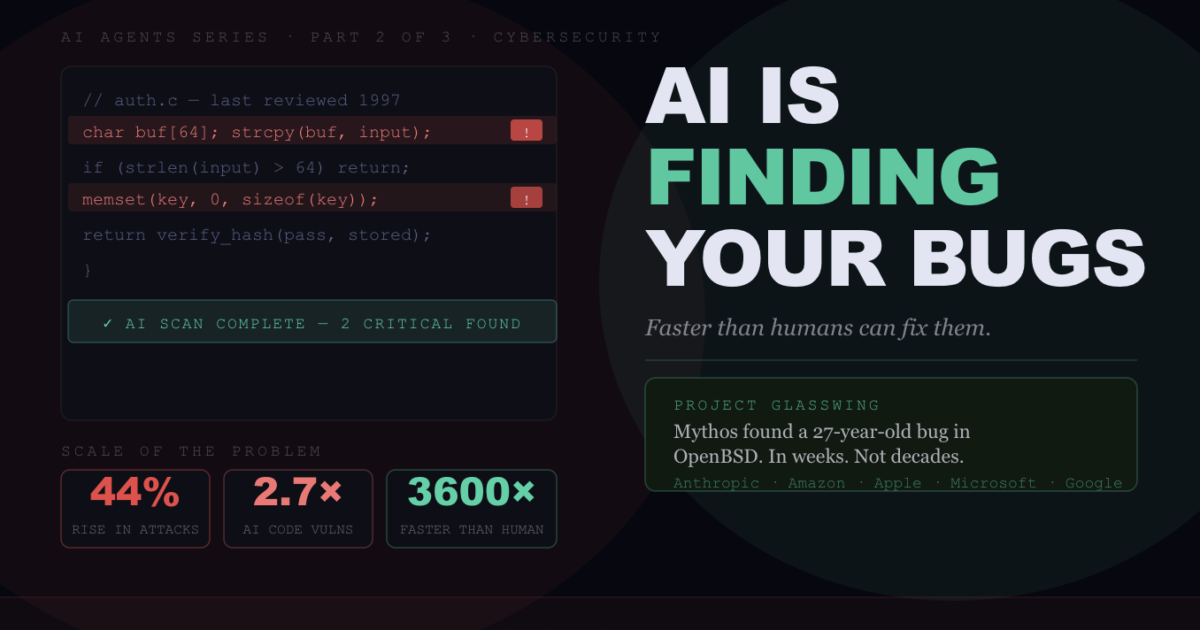

- Operate at up to 3,600× human speed on specific vulnerability discovery tasks (CAI framework, peer-reviewed)
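Vulnerability chaining, mentioned in the list above, can be pictured as path-finding over a graph: each flaw is an edge that moves an attacker from one privilege state to another. The sketch below uses a breadth-first search over a small, entirely hypothetical set of flaws and state names. None of them correspond to real CVEs or to how any particular tool models a system.

```python
from collections import deque
from typing import List, Optional

# Hypothetical flaws: (from_state, to_state, description).
FLAWS = [
    ("unauthenticated", "low_priv_user", "verbose error leaks session token"),
    ("low_priv_user", "local_shell", "upload filter bypass"),
    ("local_shell", "root", "race condition in a setuid helper"),
    ("unauthenticated", "read_only_api", "open debug endpoint"),
]

def find_chain(start: str, goal: str) -> Optional[List[str]]:
    """Breadth-first search for the shortest sequence of flaws from start to goal."""
    queue = deque([(start, [])])
    visited = {start}
    while queue:
        state, path = queue.popleft()
        if state == goal:
            return path
        for src, dst, description in FLAWS:
            if src == state and dst not in visited:
                visited.add(dst)
                queue.append((dst, path + [description]))
    return None  # no combination of known flaws reaches the goal

print(find_chain("unauthenticated", "root"))
```

Each of the three flaws on the resulting path might be rated low severity in isolation. Together they lead from no access to full control, which is why chains matter more than individual scores.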

Project Glasswing — The Most Important AI Security Story of 2026

In April 2026, Anthropic announced Project Glasswing — a major cybersecurity initiative involving Amazon, Apple, Microsoft, Google, and Cisco. At its centre: Claude Mythos Preview, a specialised security-focused AI model.

The findings from Mythos Preview are extraordinary. The model found a 27-year-old vulnerability hiding in OpenBSD — a system specifically designed to be hard to break. It found a 16-year-old bug in FFmpeg that had survived five million automated test runs by traditional tools. It found ways to chain vulnerabilities in the Linux kernel to achieve complete machine control. All of this happened in a few weeks.

Mythos Preview has now been reported to have discovered thousands of high-severity zero-day vulnerabilities in every major operating system and web browser. The security community’s reaction is a mixture of relief and alarm — relief because these vulnerabilities are being found by defenders rather than attackers first, alarm because the capability is now clearly real and replicable.

“AI is more effective than humans at finding software bugs because it can quickly scan thousands of lines of code and detect problems, something people are not necessarily good at. Humans are the weakest link in security.”

— CBS News, April 11, 2026 (reporting on Project Glasswing)

The Double-Edged Reality

Every capability that makes AI effective at finding vulnerabilities for defenders also makes it effective for attackers. This is the uncomfortable reality that security leaders are grappling with in 2026.

The window between a vulnerability being discovered and an exploit being written for it has shrunk to near zero. In 2026, a zero-day can be weaponised within minutes to hours of discovery, because automated systems move faster than a human security team can respond. The IBM X-Force Threat Intelligence Index 2026 reported a 44% rise in attacks exploiting application vulnerabilities.

The Security Paradox — AI as Both Shield and Sword

- AI agents can identify up to 77% of vulnerabilities in real-world software systems — better coverage than human red teams

- But fewer than 1% of the vulnerabilities discovered by AI have been patched so far, leaving an enormous backlog

- AI-generated code has 2.7× higher vulnerability density than human-written code, according to SQ Magazine 2026

- High- and critical-severity vulnerabilities (CVSS 7.0+) appear 2.5× more often in AI-generated code than in human-written code

- 93% of organisations now use AI-generated code in development workflows — but only 12% apply the same security standards to it as traditional code

- Hackers are using AI to craft highly personalised phishing attacks, making social engineering attacks significantly harder to detect
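For reference, the CVSS 7.0 threshold used in the list above spans two bands of the CVSS v3.x qualitative rating scale: High (7.0 to 8.9) and Critical (9.0 to 10.0). A minimal sketch of that mapping follows; the function name `cvss_severity` is an illustrative choice.

```python
def cvss_severity(score: float) -> str:
    """Map a CVSS v3.x base score to its qualitative severity rating."""
    if not 0.0 <= score <= 10.0:
        raise ValueError("CVSS base scores range from 0.0 to 10.0")
    if score == 0.0:
        return "None"
    if score < 4.0:
        return "Low"       # 0.1 to 3.9
    if score < 7.0:
        return "Medium"    # 4.0 to 6.9
    if score < 9.0:
        return "High"      # 7.0 to 8.9
    return "Critical"      # 9.0 to 10.0

for example in (3.1, 6.9, 7.0, 9.8):
    print(example, cvss_severity(example))
```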

AI-Generated Code — The New Security Liability

The security paradox runs deeper than offensive vs defensive use of AI scanning tools. AI-generated code itself is introducing vulnerabilities at scale.

AI coding tools like Claude Code and GitHub Copilot are now part of development workflows at 93% of organisations. The productivity gains are real and significant, but the security implications are only now being fully understood. AI-generated code has 2.7× higher vulnerability density than human-written code. By June 2025, AI-generated code was adding more than 10,000 new security findings per month across studied repositories, a 10× jump from December 2024.
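To put the 10× figure in perspective, the short calculation below works out what it implies. The two input numbers come from the paragraph above. Treating the growth as steady month-on-month compounding is a simplifying assumption made here, not something the underlying study states.

```python
june_2025_findings = 10_000   # "more than 10,000" new findings per month (from the text)
jump_factor = 10              # "10x jump" since December 2024 (from the text)
months_elapsed = 6            # December 2024 to June 2025

# Baseline implied by the two figures above.
december_2024_findings = june_2025_findings / jump_factor

# Assumption: growth compounded evenly each month over the period.
monthly_growth = jump_factor ** (1 / months_elapsed)

print(f"Implied December 2024 baseline: about {december_2024_findings:,.0f} per month")
print(f"Implied average monthly growth: about {monthly_growth - 1:.0%}")
```

Under that assumption, findings started from roughly 1,000 per month and grew by about 47% each month.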

The core problem is one of objectives. AI models optimise for functional correctness (does the code do what was asked?) rather than for security properties (does the code introduce new attack surface?). These are different goals, and current models are not reliably trained to balance both at once.

What This Means for Your Business

Every business that writes or uses software — which means every business — needs to recalibrate its security posture based on three changes:

Vulnerability discovery is no longer the bottleneck. The bottleneck is now remediation. AI can find vulnerabilities faster than security teams can patch them, so the priority is building automated remediation pipelines that respond at machine speed, not just adding detection capability. Fewer than 1% of AI-discovered vulnerabilities have been patched so far, and that gap is a critical business risk.
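One building block of such a pipeline is deciding what to fix first when findings arrive faster than they can be patched. The sketch below orders a backlog by severity and then by age. The `Finding` fields, the identifiers, and the ordering rule are hypothetical; a real pipeline would likely also weigh exploitability and exposure.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Finding:
    identifier: str   # hypothetical internal ticket ID
    cvss: float       # CVSS base score, 0.0 to 10.0
    days_open: int    # days since the finding was reported

def remediation_order(findings):
    """Sort findings so the highest-severity, longest-open items come first."""
    return sorted(findings, key=lambda f: (-f.cvss, -f.days_open))

backlog = [
    Finding("F-101", 5.3, 40),
    Finding("F-102", 9.8, 2),
    Finding("F-103", 9.8, 15),
    Finding("F-104", 7.5, 90),
]

for finding in remediation_order(backlog):
    print(finding.identifier, finding.cvss, finding.days_open)
```

Here the two 9.8 findings come first, with the older one ahead, followed by the 7.5 and then the 5.3.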

AI-generated code requires different security standards. If your developers are using AI coding assistants, as they are at 93% of organisations, you need security review processes that specifically account for the higher vulnerability density of AI-generated code. Only 12% of organisations even hold AI-generated code to the same security standards as traditional code. That is not sustainable as AI-generated code becomes a larger share of your production codebase.
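As one illustration of what a differentiated standard could look like, the sketch below applies a stricter merge gate to changes marked as AI-assisted. Everything here is a hypothetical policy: the `ai_assisted` flag, the thresholds, and the `gate` function are assumptions for illustration, not an established practice or any vendor's feature.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Change:
    identifier: str
    ai_assisted: bool        # hypothetical flag, e.g. declared by the author
    max_finding_cvss: float  # highest CVSS among scanner findings, 0.0 if none

# Hypothetical policy: AI-assisted changes are blocked at a lower severity
# threshold than human-written ones.
BLOCK_THRESHOLD = {True: 4.0, False: 7.0}

def gate(change: Change) -> dict:
    """Decide whether a change is blocked and whether it needs human security review."""
    blocked = change.max_finding_cvss >= BLOCK_THRESHOLD[change.ai_assisted]
    return {
        "blocked": blocked,
        # AI-assisted changes always get a security review in this sketch.
        "needs_security_review": change.ai_assisted or blocked,
    }

print(gate(Change("change-1", ai_assisted=True, max_finding_cvss=5.0)))
print(gate(Change("change-2", ai_assisted=False, max_finding_cvss=5.0)))
```

The same medium-severity finding blocks the AI-assisted change but not the human-written one, which is the kind of asymmetry a higher vulnerability density would justify.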

The attack window has compressed to near-zero. Patch management processes designed for monthly or weekly cycles are no longer adequate. If an AI agent identifies a critical vulnerability in your software today, threat actors may have an automated exploit ready within hours. Machine-speed defence requires machine-speed patching for critical severity findings.
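A severity-based patch deadline is one way to express this in policy. The sketch below computes a deadline from the discovery time and checks whether a finding is overdue. The target durations in `PATCH_SLA` are placeholder assumptions; appropriate values depend on the organisation and its risk tolerance.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical service-level targets per severity rating.
PATCH_SLA = {
    "Critical": timedelta(hours=24),
    "High": timedelta(days=3),
    "Medium": timedelta(days=14),
    "Low": timedelta(days=30),
}

def patch_deadline(severity: str, discovered_at: datetime) -> datetime:
    """Return the time by which a finding of this severity should be patched."""
    return discovered_at + PATCH_SLA[severity]

def is_overdue(severity: str, discovered_at: datetime, now: datetime) -> bool:
    """True if the patch deadline for this finding has already passed."""
    return now > patch_deadline(severity, discovered_at)

discovered = datetime(2026, 4, 1, 9, 0, tzinfo=timezone.utc)
checked = discovered + timedelta(hours=25)

print(patch_deadline("Critical", discovered))
print(is_overdue("Critical", discovered, checked))
print(is_overdue("High", discovered, checked))
```

With these placeholder targets, a critical finding is overdue 25 hours after discovery while a high-severity one still has time, a far tighter cycle than a weekly or monthly patch window.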

The Verdict

AI’s ability to find software bugs faster than humans is one of the most consequential developments in cybersecurity in decades. It is transforming the threat landscape in both directions simultaneously — enabling defenders to find and fix vulnerabilities at unprecedented scale, while enabling attackers to discover and weaponise them faster than ever before.

The organisations that will navigate this well are those that treat AI-powered vulnerability discovery as infrastructure — not a one-off audit — and build the remediation capacity to match the discovery speed. The organisations that will struggle are those that either ignore the new AI-powered attack surface or deploy AI scanning without the operational capacity to act on what it finds.

FAQ

How is AI finding software bugs faster than humans?

AI agents scan millions of lines of code at speeds humans cannot match, identify structural patterns associated with vulnerability classes regardless of novelty, and do not suffer from the attention degradation or cognitive load limits that cause human reviewers to miss things. Research shows AI agents can operate up to 3,600× faster than humans on specific vulnerability discovery tasks, and 11× faster on average.

What is Project Glasswing?

Project Glasswing is a major cybersecurity initiative announced by Anthropic in April 2026, involving Amazon, Apple, Microsoft, Google, and Cisco. Its Claude Mythos Preview model found thousands of high-severity zero-day vulnerabilities in every major operating system and web browser, including a 27-year-old OpenBSD vulnerability and a 16-year-old FFmpeg bug that had survived five million automated test runs.

Is AI-generated code less secure than human-written code?

Yes, significantly, according to the figures cited above. AI-generated code has 2.7× higher vulnerability density than human-written code, and high- and critical-severity vulnerabilities (CVSS 7.0+) appear 2.5× more often. By mid-2025, AI-generated code was adding over 10,000 new security findings per month across studied repositories. 93% of organisations use AI-generated code, but only 12% apply equivalent security standards to it.

What should businesses do about AI-powered vulnerability discovery?

Three priorities: build automated remediation pipelines to match discovery speed (fewer than 1% of AI-discovered vulnerabilities have been patched); apply dedicated security review processes to AI-generated code in your development workflow; and compress your patch management cycle for critical findings, because attack windows have shrunk to hours, not days or weeks.

Sources: NPR/OPB (April 11, 2026), CBS News (April 11, 2026), The Hacker News, Medium/Trends24, SQ Magazine, Cycode, IBM X-Force Threat Intelligence Index 2026, CAI Research Paper (arXiv), CyberScoop RSAC 2026

clusters.media · Part 2 of the Clusters Media AI Agents Series