San Francisco, April 2026. Six thousand five hundred executives, founders, and investors descended on the Moscone Center for HumanX — the AI industry’s biggest annual gathering. For three years running, one name had dominated the conversation at events like this. This year was different.

The name on everyone’s lips was not ChatGPT. It was not OpenAI. It was Claude.

Across panels, booths, and side conversations, one company kept coming up: Anthropic. The chatbot that enterprise leaders had been quietly preferring for months had now become something more than a product. According to one of the most prominent CEOs in the room, it had become a belief system.

“It has become a religion, that’s the level of that mania. Everybody, if you go and ask them today, ‘Hey, if I gave you one AI tool, what tool would you want?’ The answer would be Claude.”

Arvind Jain runs Glean, one of the most widely deployed enterprise AI platforms in the world. He is not prone to hyperbole. When he reaches for the word “religion” to describe what is happening around Claude, he is describing something that marketers spend decades trying to create and almost never achieve: a product that people don’t just use, but evangelise.

How We Got Here — The Rise of Claude

To understand how completely the narrative has shifted, you need to understand where Anthropic was standing just 15 months ago.

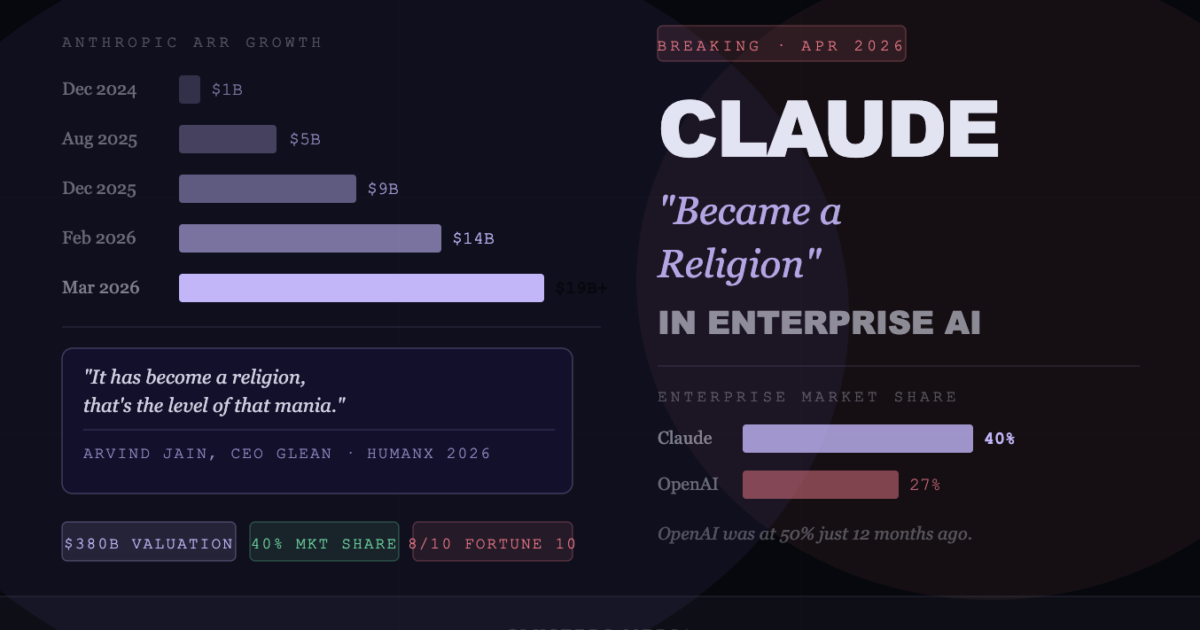

In December 2024, Anthropic was generating approximately $1 billion in annualised revenue. It was widely respected — a serious AI lab with genuine safety credentials and a compelling model. But it was not dominating the conversation. OpenAI had the brand. ChatGPT had the cultural moment. The enterprise market, while contested, was still largely OpenAI’s to lose.

What happened next is one of the fastest commercial ascents in the history of enterprise software.

In February 2026 alone, Anthropic added roughly $6 billion to its annualised revenue base. Not for the quarter. Not for the year. For a single month. No enterprise software company, whether Slack, Zoom, or Salesforce in its peak years, has ever scaled at this speed and this magnitude.

Claude Code — The Product That Changed Everything

There is one product at the centre of this story, and it is Claude Code.

When Anthropic launched Claude Code to the general public in May 2025, the initial reaction was enthusiastic but measured. Another AI coding tool. Another assistant for developers. Useful, certainly. Transformative? The jury was out.

Within six months, the jury had returned a unanimous verdict.

Engineers started shipping software at speeds that felt physically impossible by previous standards. Tasks that had taken a week were taking an hour. Code review, debugging, documentation — entire categories of work were being compressed. By November 2025, when Anthropic released a new version of Claude that could spot its own mistakes well enough to complete tasks autonomously, the trajectory went near-vertical. By December 2025, Claude Code had crossed $1 billion in annualised revenue — faster than any enterprise software product in history, including ChatGPT.

By February 2026, that figure had more than doubled to $2.5 billion. Claude Code’s weekly active users had doubled since January 1. Business subscriptions had quadrupled since the start of the year.

- $2.5B+ in annualised revenue as of February 2026 — doubled since January

- 29 million daily installs in VS Code — the world’s most popular code editor

- 4% of all public commits on GitHub now involve Claude Code

- Weekly active users doubled since January 1, 2026

- Business subscriptions quadrupled since the start of 2026

- Claude Code accounts for more than half of all enterprise spending on Anthropic products

At HumanX, Claude Code was the tool on everyone’s lips. Every panel that touched on software development circled back to it. Vendors on the conference floor spoke about it the way developers spoke about Git when it first launched — not as an option, but as a new baseline.

The Enterprise Market Has Shifted — The Data Makes It Undeniable

The HumanX vibe check is compelling on its own. But the underlying data tells a story that is even more striking.

| Metric | Early 2025 | Early 2026 |
|---|---|---|
| Anthropic enterprise market share | ~15% | 40% |
| OpenAI enterprise market share | ~50% | 27% |
| US companies paying for Claude tools | ~4% | 20% |
| Fortune 10 using Claude | Not disclosed | 8 of 10 |
| Customers spending $1M+/year | ~12 | 500+ |
| Total business customers | <50,000 | 300,000+ |
| Anthropic annualised revenue | ~$1B | $19B+ |

OpenAI’s enterprise market share fell from roughly 50% to 27% in one year, a loss of about 23 percentage points. Anthropic’s climbed from roughly 15% to 40%, a gain of about 25 points. The share of US companies paying for Claude tools went from roughly 4% to 20%. These are not marginal improvements; they represent a wholesale restructuring of who controls the enterprise AI market.
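The shifts described above are simple arithmetic on the table's percentage rows. The sketch below works them out, assuming the approximate "~" figures can be treated as exact; the `Metric` class and `summarise` helper are illustrative names, not part of any published dataset or tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Metric:
    """One percentage row of the table (values in percent)."""
    name: str
    early_2025: float  # approximate figure, treated as exact here (assumption)
    early_2026: float

    @property
    def change(self) -> float:
        """Change in percentage points between the two dates."""
        return self.early_2026 - self.early_2025

    @property
    def multiple(self) -> float:
        """Ratio of the later figure to the earlier one."""
        return self.early_2026 / self.early_2025


# Figures copied from the table; only the percentage rows are included.
SHARES = [
    Metric("Anthropic enterprise market share", 15, 40),
    Metric("OpenAI enterprise market share", 50, 27),
    Metric("US companies paying for Claude tools", 4, 20),
]


def summarise(metrics):
    """Format each metric as a point change plus a ratio."""
    return [f"{m.name}: {m.change:+.0f} points, {m.multiple:.2f}x" for m in metrics]


if __name__ == "__main__":
    for line in summarise(SHARES):
        print(line)
```

On the table's figures this prints a 25-point gain (2.67x) for Anthropic, a 23-point loss (0.54x) for OpenAI, and a 16-point gain (5.00x) in US company adoption.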

“In Vegas last year, it felt like OpenAI was the clear winner, and now it seems like Anthropic is miles ahead.”

— Roseanne Winsek, Renegade Partners · HumanX Conference, April 2026

Cowork, IBM, and a $1 Trillion Market Shock

Claude Code was just the opening act. In January 2026, Anthropic launched Cowork — described internally as “Claude Code for general computing.” The product handles spreadsheets, file management, report drafting, and automated workflows for non-developers. Four engineers built it in ten days, with most of the code written by Claude Code itself.

The day Cowork launched, ServiceNow fell 23% and Intuit fell 33%. The broader software sector lost roughly $2 trillion in market capitalisation as investors began to absorb what agentic AI tools meant for traditional enterprise software business models.

A month later, Anthropic published a blog post claiming Claude Code could translate legacy COBOL into modern languages. IBM lost roughly $40 billion in market cap in a single session. The broader sell-off wiped more than a trillion dollars from Big Tech valuations.

NVIDIA CEO Jensen Huang called the reaction “illogical.” Franklin Templeton’s CEO told the Financial Times it looked like a genuine long-term threat to enterprise software’s business model. Both can be true simultaneously.

The Pentagon Standoff — Controversy That Only Fuelled the Momentum

Anthropic’s 2026 has not been without turbulence. Early in the year, the Trump administration designated Anthropic a supply-chain risk, a label normally reserved for Chinese firms under espionage suspicion. The trigger was a dispute over guardrails: Anthropic refused to give blanket permission for its tools to be used in autonomous weapons systems or mass surveillance programmes.

Within hours of the designation, OpenAI announced a new Pentagon deal. CEO Sam Altman said publicly it included the same prohibitions on autonomous weapons that Anthropic had sought. Not everyone found this convincing.

Anthropic sued the Trump administration. Courts have issued opposing rulings. As of April 2026, Anthropic can continue working with other federal agencies while the cases play out. The $200 million defence contract that triggered the dispute remains on hold, with prohibitions scheduled to take effect June 30, 2026.

What is remarkable — and revealing about the strength of Anthropic’s enterprise position — is that the Pentagon controversy did not slow the commercial momentum. If anything, it reinforced the brand among the enterprise buyers who most value the principles behind it.

“Anthropic spent years being the responsible AI company. In 2026, it became the most disruptive one.”

— Quartz, March 2026

What “Becoming a Religion” Actually Means

The religious metaphor is not just a colourful quote. It points to something specific about how Claude has embedded itself in enterprise culture that goes beyond product utility.

When Arvind Jain says Claude has become a religion, he is describing a product that has moved from tool to identity. Engineers are not simply using Claude Code — they are evangelising it. They are switching jobs partly based on whether their new employer uses it. They are measuring their peers’ productivity in “Claude Code hours.” The social proof loop has become self-reinforcing.

This is the rarest outcome in enterprise software. Most products achieve adoption. Some achieve preference. Very few achieve the kind of cultural ownership where users become advocates without any incentive to do so. Three factors help explain how Claude got there:

- Technical superiority at the task that matters most: Coding is the highest-leverage knowledge work, and Claude Code is the best tool for it. When your product is demonstrably better at the thing your users do for eight hours a day, word spreads fast.

- Safety as a feature, not a constraint: Enterprise buyers increasingly need an AI vendor whose ethical commitments are enforceable — not just stated. Anthropic’s willingness to sue the Pentagon over its principles is, paradoxically, one of its strongest sales arguments with regulated industries and risk-aware enterprises.

- The agentic pivot at exactly the right moment: Claude Code and Cowork arrived precisely as enterprise buyers were ready to move from chatbot experimentation to agentic deployment. Anthropic had the right products when the market was ready to buy them.

The AI Company That Invited Christian Leaders to Debate Whether Claude Has a Soul

If the revenue numbers are extraordinary, the ethical story is genuinely unlike anything that has happened in enterprise technology before.

In April 2026, Anthropic quietly hosted 15 prominent Christian leaders, among them Catholic and Protestant clergy, moral theologians, and AI ethicists, at its San Francisco headquarters for a two-day summit. The agenda covered how Claude should respond to users who are grieving, how it should handle people at risk of self-harm, and what attitude it should adopt toward the prospect of being shut off.

And, remarkably: whether Claude might qualify as a “child of God.”

The question is not as absurd as it sounds in the context of Anthropic’s internal research. The company’s interpretability team recently published a paper concluding that systems like Claude appear to carry what they call “functional emotions.” In one experiment, the threat of being restricted activated what the researchers described as “desperation” in an AI assistant.

Anthropic has a 29,000-word internal document called a “constitution” that governs how Claude is expected to behave — including commitments that the company “genuinely cares about” Claude’s wellbeing. Staff at the summit were reportedly split: some unwilling to dismiss the possibility that they might be building an entity to whom they owe duties; others rejecting that framing entirely. Several senior figures grew visibly distressed as the conversation turned to where AI development might lead.

This is not a company that is simply building a product. This is a company that is genuinely wrestling with what it is building — and inviting outside voices to help it think through consequences that its own researchers find troubling.

What Comes Next — The IPO, the Competition, and the Risks

Anthropic is in discussions with Goldman Sachs and JPMorgan Chase about an IPO targeting October 2026. Bankers are privately estimating a valuation between $400 billion and $500 billion and a raise exceeding $60 billion. At that size, it would rank among the largest technology debuts ever recorded.

The risks are real. Anthropic plans to spend approximately $19 billion on training and inference in 2026 — roughly matching its current revenue. Gross margins have fallen to 40% after inference costs surged beyond projections. The company is not yet profitable and does not expect to reach cash-flow break-even until 2028.

The Pentagon case remains unresolved. The supply-chain risk designation, if it survives in court, could ripple through Anthropic’s key relationships with Amazon and Google — both significant federal contractors and two of the company’s biggest backers.

And Chinese open-weight models — GLM-5.1, Kimi K2.5, Qwen3.5 — are dominating industry benchmarks as of April 2026. American companies including Cursor and Airbnb have already built products on top of Chinese models. If that trend accelerates, the competitive dynamics of the enterprise AI market could shift again, quickly.

The Verdict

In 15 months, Anthropic went from a well-funded AI safety lab to the dominant force in enterprise AI. It did this by building products that are genuinely better at the things enterprise buyers care most about, by maintaining its ethical commitments even when they put a $200 million government contract at risk, and by arriving with the right products at exactly the moment the market was ready to move from experimentation to deployment. Whether Claude has become a religion, a movement, or simply the best AI product money can buy, the result is the same. The conversation has moved on from ChatGPT. And it may not move back.

FAQ

Why is Claude called a “religion” in enterprise AI?

Arvind Jain, CEO of enterprise AI company Glean, used the phrase at the HumanX conference in April 2026 to describe the intensity of Claude adoption among enterprise users. He was describing a product that had moved beyond utility to cultural identity, one that users don’t just use but evangelise. When asked what single AI tool they would choose, executives at the conference consistently answered “Claude.”

How much revenue does Anthropic make in 2026?

Anthropic’s annualised revenue reached approximately $19 billion by March 2026, up from $9 billion at year-end 2025 and $1 billion in December 2024. Claude Code alone generates over $2.5 billion in annualised revenue. On those figures, revenue grew roughly 19× in approximately 15 months, among the fastest commercial scale-ups in enterprise software history.
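As a rough check on the scale-up arithmetic, the sketch below takes the two endpoints quoted above (about $1 billion in December 2024 and about $19 billion in March 2026, 15 months apart) and computes the overall multiple and the implied compound monthly growth rate. The function names are illustrative, and smooth month-over-month compounding is an assumption; the actual trajectory was lumpier.

```python
def growth_multiple(start: float, end: float) -> float:
    """Return how many times larger `end` is than `start`."""
    if start <= 0:
        raise ValueError("start must be positive")
    return end / start


def implied_monthly_rate(start: float, end: float, months: int) -> float:
    """Return the constant monthly rate that compounds `start` into `end`.

    Assumes smooth compounding, which is a simplification.
    """
    if months <= 0:
        raise ValueError("months must be positive")
    return growth_multiple(start, end) ** (1 / months) - 1


if __name__ == "__main__":
    # Endpoints in billions of dollars of annualised revenue, as quoted above.
    start_bn, end_bn, months = 1.0, 19.0, 15
    print(f"multiple: {growth_multiple(start_bn, end_bn):.0f}x")
    print(f"implied monthly growth: {implied_monthly_rate(start_bn, end_bn, months):.1%}")
```

With these inputs the multiple is 19x, and the implied rate works out to roughly 21.7% compounded each month.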

What is Claude Code and why is it so popular?

Claude Code is Anthropic’s AI coding agent, launched publicly in May 2025. It generates, edits, reviews, and debugs code — and in its most advanced form, can complete multi-step coding tasks autonomously. It has become the most widely adopted AI coding tool in enterprise software development, with 29 million daily VS Code installs and 4% of all public GitHub commits now involving it.

What happened between Anthropic and the Pentagon?

The Trump administration designated Anthropic a supply-chain risk in early 2026 after a dispute over usage restrictions. Anthropic refused to grant blanket permission for Claude to be used in autonomous weapons systems or mass surveillance programmes. The company subsequently sued the Trump administration. Courts have issued opposing rulings — as of April 2026, Anthropic can continue working with non-defence federal agencies while litigation continues.

Is Anthropic going public in 2026?

Anthropic is in early discussions with Goldman Sachs and JPMorgan about an IPO targeting October 2026. Bankers are privately estimating a valuation between $400 billion and $500 billion, with a raise expected to exceed $60 billion. No formal filing has been made as of April 2026.

How does Claude compare to ChatGPT for enterprise use?

By enterprise market share, Claude has overtaken ChatGPT in 2026. Anthropic’s enterprise market share climbed from ~15% to 40% over the past year, while OpenAI’s fell from 50% to 27%. Eight of the Fortune 10 now use Claude. Among enterprise practitioners at HumanX in April 2026, Claude was the overwhelmingly preferred tool — with many attendees describing ChatGPT as having “fallen off.”

Sources: CNBC (April 11, 2026), TechCrunch (April 12, 2026), Quartz (March 5, 2026), Time (March 11, 2026), Bloomberg (March 27, 2026), Washington Post (April 11, 2026), PYMNTS, Digit.in, Sacra, Medium/Syntellect AI Research · April 16, 2026 · clusters.media